Robots to the Rescue

by Danielle Lucey and Brett Davis

Reprinted with permission from AUVSI’s Unmanned Systems Magazine, September 2013

In the future, when disaster strikes, there might be a robot ready to help. That’s the goal of DARPA’s latest envelope-pushing contest, the Robotics Challenge, which seeks to show how a humanoid robot could respond to a disaster scenario. The new event follows recent disasters in which robots played a role, such as the Fukushima recovery, where air and ground robots operated in a radioactive environment in which they had never before been tested. The Department of Defense has also launched a new push to improve relief for natural and man-made catastrophes.

But DARPA is taking the idea of rescue robots to the extreme by centering its contest on a humanoid, which would be better suited to navigating a post-disaster environment and using the tools available there. The agency says this approach will advance the state of supervised autonomy in perception and decision-making, mounted and dismounted mobility, dexterity, strength, and endurance.

DARPA has a history of using challenges to spur rapid technological advances, such as its earlier Grand Challenges and the Urban Challenge, which advanced automated vehicle technology.

The DARPA Robotics Challenge has three milestones for participants: a virtual contest the teams completed in June and two live events. The first live event, the trials, is scheduled for 20-21 Dec. at Homestead-Miami Speedway in Florida. The December 2014 finals will follow, culminating in a $2 million prize.

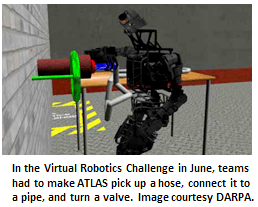

Nine software teams advanced from the Virtual Robotics Challenge, in which they had to demonstrate in a virtual environment that their software could handle an obstacle course set in a suburban area.

In the simulation, the teams had to make a robot enter, drive and exit a car; walk across muddy, rubble-filled terrain; and attach a hose to a spigot and turn a valve. The virtual environment was made possible by the DARPA-funded Open Source Robotics Foundation, which developed a cloud-based simulator, built on the Gazebo software package, designed to integrate virtually with the humanoid robot used in the live challenge.

“The VRC and the DARPA simulator allowed us to open the field for the DARPA Robotics Challenge beyond hardware to include experts in robotic software. Integrating both skill sets is vital to the long-term feasibility of robots for disaster response,” says Gill Pratt, Robotics Challenge program manager. “The Virtual Robotics Challenge itself was also a great technical accomplishment, as we have now tested and provided an open-source simulation platform that has the potential to catalyze the robotics and electro-mechanical systems industries by lowering costs to create low-volume, highly complex systems.”

Top of the VRC Heap

The top team in the Virtual Robotics Challenge was Florida’s Institute for Human and Machine Cognition, a not-for-profit research institution that, as its name suggests, investigates how humans and machines can work together. IHMC has investigated biologically inspired robots, natural language interfaces for control, even a system that would let blind users “see” through their tongue’s taste buds.

Dr. Ken Ford, the CEO of IHMC, tells Unmanned Systems that the overall competition is “an exciting challenge that DARPA has given the robotics research community,” one that “will likely lead to significant advances in the state of robotics.”

“Not all such advances can or should be made through competitions,” he says, but “when the time is right and when the circumstances are that competition, coupled with an open sharing of ideas, will likely lead to significant advances, then it does make sense.”

IHMC had 22 people on its team for the VRC. The teams went one at a time to run their software in the simulated environment. “It was a good approach,” Ford says. “If you think about it from their [DARPA’s] point of view, this VRC phase could be done relatively inexpensively, without commitment to hardware.” IHMC entered the competition because it is interested in developing technology that leverages and extends human performance. “In the robotics realm, humanoid robots, robots that are designed to function in the physical built environment, are of interest to us,” Ford says, and such robots are the focus of the overall competition.

The follow-up December 2013 challenge will evaluate teams based on their ability to get the robot to drive a utility vehicle, travel across rubble, remove debris blocking an entryway, open a door and enter a building, climb a ladder and walk across a catwalk, use a power tool to break through a barrier, locate and close a valve of a leaking pipe, and attach an object like a wire harness or a fire hose.

Looking ahead to December, IHMC’s Ford says, “I hope that this DARPA program ignites the research activity that pushes the field forward. The challenges that DARPA will give the teams are very difficult. If the teams are able to accomplish them, it will be impressive.” The 2014 finals will ask the teams to perform all of those tasks as part of one continuous disaster scenario.

Team Tracks

The challenge offers four separate tracks through which teams can participate. The first is Track A, in which seven teams were funded to build both hardware and software. These teams went through a critical design review in June to advance to the December trials, where the six remaining teams will compete with their own robots. Eleven Track B teams received DARPA funding to develop software, while more than 100 Track C teams, which received no DARPA funding, used open-source software to tackle the same software tasks. Qualifying teams from these two tracks faced off in the Virtual Robotics Challenge, which whittled the field down to nine teams.

Those teams, in order of how well they performed in the VRC, are Team IHMC, from Pensacola, Fla.; WPI Robotics Engineering C Squad, from Worcester Polytechnic Institute in Worcester, Mass.; MIT, from Cambridge, Mass.; Team TRACLabs, from TRACLabs Inc. in Webster, Texas; JPL/UCSB/Caltech, from the Jet Propulsion Laboratory in Pasadena, Calif.; Team ViGIR, from TORC Robotics, TU Darmstadt in Germany and Virginia Tech, based in Blacksburg, Va.; Team K, from Japan; TROOPER, from Lockheed Martin, the University of Pennsylvania and Rensselaer Polytechnic Institute, based in Cherry Hill, N.J.; and Case Western Reserve University in Cleveland, Ohio.

DARPA gave the top six teams the advantage of working with an ATLAS robot between now and December, and they are receiving additional DARPA funding. The JPL/UCSB/Caltech team is also participating as a Track A team and decided to merge its two efforts so that DARPA funding for Tracks B and C could stretch to seven teams.

The final track is Track D, which is for unfunded teams developing their own hardware and software for the December 2013 trials. The best teams from Track D will move on to the finals in December 2014. Preregistration for this track is open through 30 Sept.

TORC Robotics has a history of competing in robotics challenges. It participated in DARPA’s earlier Grand and Urban Challenges and collaborated with fellow team member Virginia Tech in 2011 on the Blind Driver Challenge. The third team member, TU Darmstadt, has competed in RoboCup’s small-scale humanoid soccer competitions. “If you look back at TORC, several years ago most of the company’s roots started back with the Intelligent Ground Vehicle Competition and with the DARPA Urban Challenge,” says TORC spokesperson Andrew Culhane. “When that challenge came out, we thought it was far out and far reaching technology. … So looking at it, we wanted to reach forward and be a part of this competition such that seven to 10 years from now, we’re well positioned in this new market.”

TORC Senior Research Scientist David Conner is serving as the principal investigator for this competition. He says the team is focused on high-level software behaviors and large-scale systems integration, which he says has traditionally been TORC’s focus when it develops software for more conventional ground vehicles.

Team ViGIR is using sliding autonomy to determine how the robot completes a task. “What we’re talking about there is there are different levels of autonomy,” says Conner. “At some points we will command the robot to move its arm to a particular pose. At other points we will just say, ‘Walk to this point,’ and the robot on its onboard processing is doing footstep planning and actually executing those footsteps. At one level you are specifying a task, ‘Move here; pick up that object,’ and on another level it’s more like teleoperation.” The operator can then choose how much control to hand off to the robot.

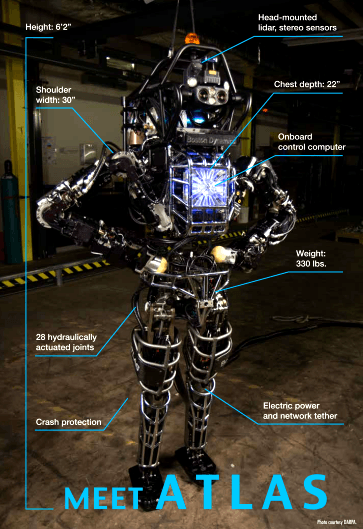

Meet ATLAS

On 8 July, DARPA unveiled the robot that the Track B and C teams would be working with. The seven teams will be working with Boston Dynamics’ ATLAS robot, the latest iteration of a line of humanoids the Massachusetts company has been developing with DARPA’s help.

The robot stands 6 feet 2 inches tall, has 30-inch-wide shoulders and weighs 330 pounds. “We’d seen simulation models of it, but just seeing the size and the mass of the robot, it’s an impressive system,” says TORC’s Conner.

“The Virtual Robotics Challenge was a proving ground for teams’ ability to create software to control a robot in a hypothetical scenario. The DRC Simulator tasks were fairly accurate representations of real-world causes and effects, but the experience wasn’t quite the same as handling an actual, physical robot,” says DARPA’s Pratt. “Now these seven teams will see if their simulation-honed algorithms can run a real machine in real environments. And we expect all teams will be further refining their algorithms, using both simulation and experimentation.”

The robot has an onboard real-time control computer, a hydraulic pump and thermal management system, and 28 hydraulically actuated joints. The robot’s head comes courtesy of Carnegie Robotics and is outfitted with lidar and stereo sensors.

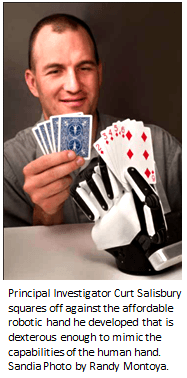

Sandia National Labs and iRobot each contributed a pair of hands the robot uses to grasp objects. Both sets of hands grew out of another DARPA project, called the Autonomous Robotic Manipulation program, which sought out more advanced robotic manipulation systems that required less human interaction and came at a much lower cost.

The iRobot hand uses flexible rubber fingers that let it bend, twist and conform to the objects it is trying to grasp. Because the hand performed so well in the ARM program, DARPA asked iRobot to adapt it for the upcoming Robotics Challenge. The three-finger hand has tactile sensors on its fingers and palm, so it can sense how hard it is pressing on a point to within 1 gram. “We’ve enabled that capability by putting as much sensing as we could inside the hand. So it’s up to the software teams to decide what they are trying to grab,” says Mark Claffee, principal robotics engineer at iRobot.

Sandia’s hands, which have three fingers and a thumb, are modular, so different fingers can be plugged in for different tasks, or tools such as screwdrivers or flashlights can be attached. The hand’s tough outer “skin” covers a layer of gel meant to mimic human tissue, letting it securely grip and hold objects.

Brett Davis is editor and Danielle Lucey is managing editor of Unmanned Systems.